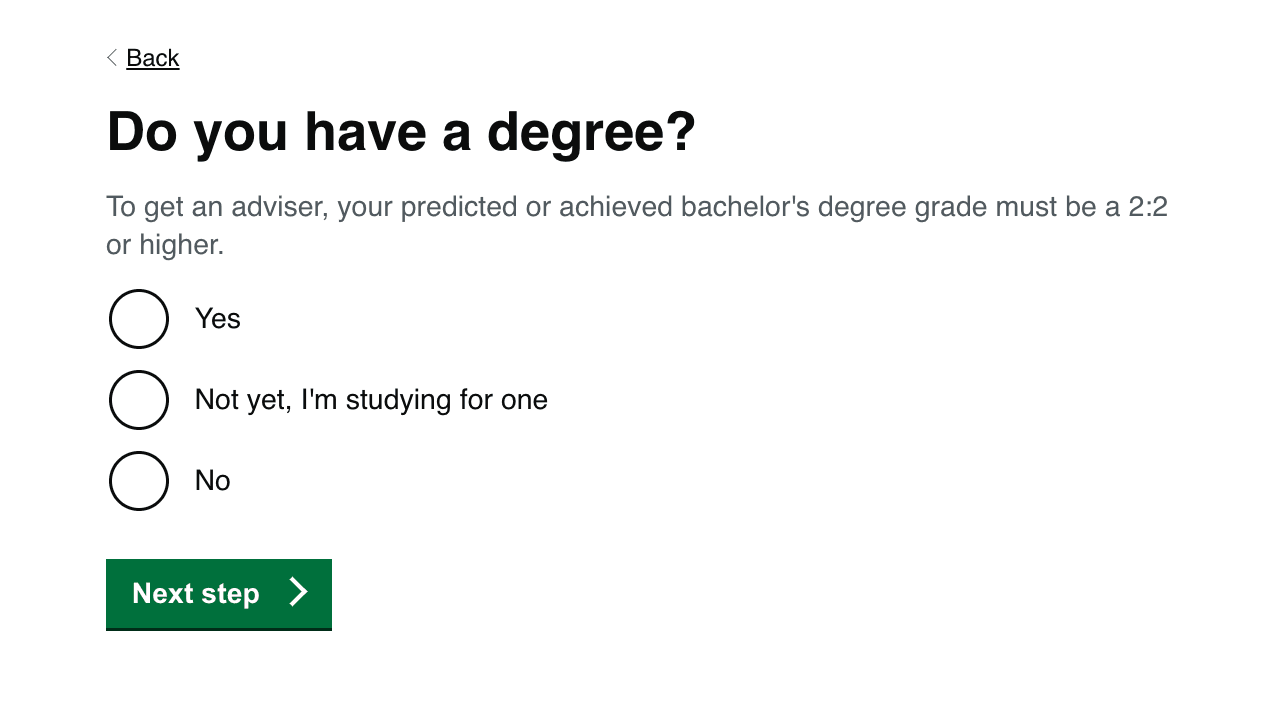

We made a slight update to our two main journeys on the Get Into Teaching site. We clarified that when we ask about degrees, we mean bachelor's degrees. This question is asked in a similar format in both journeys.

Why we’re doing this

Many of the teacher training candidates that we describe as ‘non-UK’ are actually in the UK when they interact with our service. It’s pretty common for people to be studying for a post-grad qualification in the UK and exploring their career options for after they finish their studies.

A lot of people sign up to get help from our popular teaching advisers. As part of this sign-up we ask them where their degree is from. Many answer ‘the UK’, but we later find out this isn’t true. There could be several reasons for this, but we believe one of them is that they are currently studying at postgraduate level in the UK. These candidates might think they are answering in the best way possible by saying they have a degree from the UK.

We need to get accurate information about where their initial degree was from because it will matter when they come to apply for teacher training. Not all degree courses are valued equally, so it’s better if we can assess their qualifications at an early stage. This helps us give them appropriate advice and support without delay.

There’s a wider issue at the moment about managing expectations for non-UK users. If a candidate isn’t going to be suitable, we want to let them know as early as possible so they don’t get too invested in a process that isn’t going to work out for them.

What we did

We did some basic research into what degrees are called around the world. Most anglophone countries seem to call them ‘bachelor’s degrees’. We agreed content with advisers and decided to make updates to several key pages in our 2 main journeys: the ‘routes into teaching’ journey (routes wizard) and the adviser sign-up journey.

As part of this change, we also removed the explanation of what answer the candidate needed to give in order to get an adviser. This seemed to be tempting the user to give the 'correct' answer rather than an accurate one.

Analysing the change

We wanted to see whether we were gathering better data as part of this change. It was pretty hard to see how we could be certain that candidates were answering more accurately, when we get a very varied set of users coming through these journeys.

One complication was that we were also rebranding the service at around the same time as the change was being made. This meant we were trying to attract users to our journeys using different kinds of messaging, so we might be getting a new audience with different motivations. It didn't seem like we would get good results if we didn't take this into account when we analysed the change to the main journeys on our site.

We decided that using split testing was probably the best way to analyse the performance. Half of users would be asked about their degree, and half would be asked about their bachelor's degree.
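To illustrate the idea, here is a minimal sketch of how a 50/50 split could be assigned. It is not how our service does it. The function name, the experiment label and the visitor ID are all hypothetical. It hashes a stable identifier so that the same visitor always sees the same wording of the question.

```python
import hashlib

# The two wordings being compared (labels are illustrative).
VARIANTS = ("degree", "bachelors_degree")


def assign_variant(visitor_id: str, experiment: str = "degree-wording") -> str:
    """Return the variant for a visitor, stable across repeat visits.

    Assumes visitor_id is some stable identifier, such as a cookie value.
    """
    # Include the experiment name so other tests split users differently.
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # Use the first 8 bytes as an integer and take it modulo 2
    # to get a roughly even split between the two variants.
    bucket = int.from_bytes(digest[:8], "big") % len(VARIANTS)
    return VARIANTS[bucket]


if __name__ == "__main__":
    for visitor in ("visitor-1", "visitor-2", "visitor-3"):
        print(visitor, assign_variant(visitor))
```

Because both groups are drawn from the same visitors over the same period, anything else going on at that time, such as the rebrand, should affect both halves roughly equally.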